- Myriad 4.2.1 – Audio Batch Processors

- Myriad 4.2.1 – Audio Batch Processor Software

- Myriad 4.2.1 – Audio Batch Processor Pdf

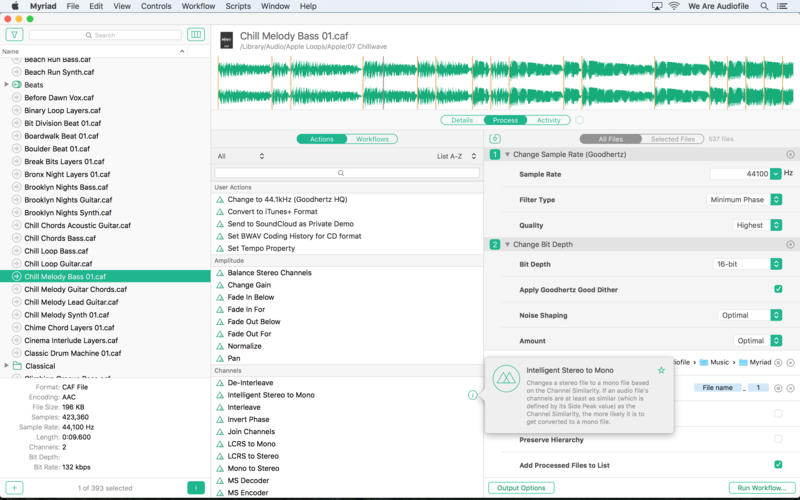

Batch processing can often be the bane of the producer’s existence. I can’t tell you how many times I’ve been an hour into a massive group edit of a large batch of audio files and thought to myself, “I wish I had just opened these one at a time instead of trying to do this as a batch edit.” Myriad is, simply put, one of the best audio batch processors. Totally redesigned, it looks beautiful and delivers incredible performance. Let Myriad do the heavy lifting while you get back to what you do best: creating great sounds and music.


To run the following benchmarks on your Jetson Nano, please see the instructions here.

Jetson Nano can run a wide variety of advanced networks, including the full native versions of popular ML frameworks like TensorFlow, PyTorch, Caffe/Caffe2, Keras, MXNet, and others. These networks can be used to build autonomous machines and complex AI systems by implementing robust capabilities such as image recognition, object detection and localization, pose estimation, semantic segmentation, video enhancement, and intelligent analytics.

Figure 1 shows results from inference benchmarks across popular models available online. The inference runs used batch size 1 and FP16 precision, employing NVIDIA’s TensorRT accelerator library included with JetPack 4.2. Jetson Nano attains real-time performance in many scenarios and is capable of processing multiple high-definition video streams.

Figure 1. Performance of various deep learning inference networks with Jetson Nano and TensorRT, using FP16 precision and batch size 1
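Because the benchmarks use batch size 1, throughput and latency are two views of the same number: each call processes one frame, so mean latency in milliseconds is 1000 / FPS. The sketch below shows one common way to time such a loop. It assumes a hypothetical `infer` callable standing in for a real engine call (for example a TensorRT execution context); it is not the harness NVIDIA used to produce Figure 1.

```python
import time
from typing import Callable, Tuple


def measure_fps(infer: Callable[[object], object], frame: object,
                warmup: int = 10, iterations: int = 100) -> Tuple[float, float]:
    """Return (frames per second, mean latency in ms) for batch-size-1 inference.

    `infer` is a hypothetical stand-in for a real inference call. If the real
    call is asynchronous (as GPU work usually is), it must block until the
    result is ready, or the timing below will under-count the work.
    """
    # Warm-up runs are excluded so one-time costs (lazy initialization,
    # clock ramp-up, cache population) do not skew the measurement.
    for _ in range(warmup):
        infer(frame)

    start = time.perf_counter()
    for _ in range(iterations):
        infer(frame)
    elapsed = time.perf_counter() - start

    fps = iterations / elapsed                   # one frame per call at batch size 1
    latency_ms = 1000.0 * elapsed / iterations   # mean time per frame
    return fps, latency_ms
```

For example, the 36 FPS reported for ResNet-50 on Jetson Nano corresponds to roughly 1000 / 36 ≈ 27.8 ms per frame.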

Table 1 provides full results, including the performance of other platforms like the Raspberry Pi 3, Intel Neural Compute Stick 2, and Google Edge TPU Coral Dev Board:

| Model | Application | Framework | NVIDIA Jetson Nano | Raspberry Pi 3 | Raspberry Pi 3 + Intel Neural Compute Stick 2 | Google Edge TPU Dev Board |
|---|---|---|---|---|---|---|
| ResNet-50 (224×224) | Classification | TensorFlow | 36 FPS | 1.4 FPS | 16 FPS | DNR |
| MobileNet-v2 (300×300) | Classification | TensorFlow | 64 FPS | 2.5 FPS | 30 FPS | 130 FPS |
| SSD ResNet-18 (960×544) | Object Detection | TensorFlow | 5 FPS | DNR | DNR | DNR |
| SSD ResNet-18 (480×272) | Object Detection | TensorFlow | 16 FPS | DNR | DNR | DNR |
| SSD ResNet-18 (300×300) | Object Detection | TensorFlow | 18 FPS | DNR | DNR | DNR |
| SSD Mobilenet-V2 (960×544) | Object Detection | TensorFlow | 8 FPS | DNR | 1.8 FPS | DNR |
| SSD Mobilenet-V2 (480×272) | Object Detection | TensorFlow | 27 FPS | DNR | 7 FPS | DNR |
| SSD Mobilenet-V2 (300×300) | Object Detection | TensorFlow | 39 FPS | 1 FPS | 11 FPS | 48 FPS |
| Inception V4 (299×299) | Classification | PyTorch | 11 FPS | DNR | DNR | 9 FPS |
| Tiny YOLO V3 (416×416) | Object Detection | Darknet | 25 FPS | 0.5 FPS | DNR | DNR |
| OpenPose (256×256) | Pose Estimation | Caffe | 14 FPS | DNR | 5 FPS | DNR |
| VGG-19 (224×224) | Classification | MXNet | 10 FPS | 0.5 FPS | 5 FPS | DNR |
| Super Resolution (481×321) | Image Processing | PyTorch | 15 FPS | DNR | 0.6 FPS | DNR |
| Unet (1x512x512) | Segmentation | Caffe | 18 FPS | DNR | 5 FPS | DNR |

Table 1. Inference performance results from Jetson Nano, Raspberry Pi 3, Intel Neural Compute Stick 2, and Google Edge TPU Coral Dev Board
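Relative speedups are often easier to compare than raw FPS. The following sketch computes the ratio between two platforms for a subset of Table 1 rows, treating DNR entries as missing rather than zero. The FPS values are copied from the table; the dictionary keys and platform labels are names chosen here for illustration.

```python
from typing import Dict, Optional

# FPS values copied from Table 1 (a subset of rows); None marks a DNR entry.
TABLE_1: Dict[str, Dict[str, Optional[float]]] = {
    "ResNet-50 (224x224)": {"jetson_nano": 36, "rpi3": 1.4, "rpi3_ncs2": 16, "edge_tpu": None},
    "MobileNet-v2 (300x300)": {"jetson_nano": 64, "rpi3": 2.5, "rpi3_ncs2": 30, "edge_tpu": 130},
    "SSD Mobilenet-V2 (300x300)": {"jetson_nano": 39, "rpi3": 1, "rpi3_ncs2": 11, "edge_tpu": 48},
    "Tiny YOLO V3 (416x416)": {"jetson_nano": 25, "rpi3": 0.5, "rpi3_ncs2": None, "edge_tpu": None},
}


def speedup(model: str, baseline: str, target: str = "jetson_nano") -> Optional[float]:
    """Ratio of target FPS to baseline FPS, or None if either did not run."""
    row = TABLE_1[model]
    base, tgt = row[baseline], row[target]
    if base is None or tgt is None:
        return None  # a DNR entry has no meaningful ratio
    return tgt / base


if __name__ == "__main__":
    for model in TABLE_1:
        ratio = speedup(model, "rpi3")
        label = "DNR" if ratio is None else f"{ratio:.1f}x"
        print(f"{model}: Jetson Nano vs Raspberry Pi 3 = {label}")
```

On these rows Jetson Nano comes out about 25.7x faster than the Raspberry Pi 3 on ResNet-50, while against the Edge TPU on MobileNet-v2 the ratio is 64 / 130 ≈ 0.49, reflecting the one row where the Edge TPU leads.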


DNR (did not run) results occurred frequently due to limited memory capacity, unsupported network layers, or hardware/software limitations. Fixed-function neural network accelerators often support a relatively narrow set of use cases: their layer operations are implemented in dedicated hardware, and network weights and activations must fit in limited on-chip caches to avoid significant data transfer penalties. They may fall back on the host CPU to run layers that the hardware does not support, and may rely on a model compiler that supports only a reduced subset of a framework (TFLite, for example).
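The two constraints above (a fixed set of supported operations and a limited on-chip weight budget) can be illustrated with a toy placement pass. This is a minimal sketch under loudly stated assumptions: the supported op set, the 8 MiB budget, and the layer names are all hypothetical and do not describe the Intel Neural Compute Stick 2, the Edge TPU, or any other real device. It greedily assigns layers to the accelerator and falls back to the host CPU, counting the device handoffs that imply extra data transfers.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Layer:
    name: str
    op: str          # operation type, e.g. "conv2d"
    num_params: int  # number of weights in this layer


# Hypothetical accelerator profile: the op set and on-chip budget are
# illustrative assumptions, not the specification of any real device.
SUPPORTED_OPS = {"conv2d", "relu", "maxpool", "dense"}
ON_CHIP_BYTES = 8 * 1024 * 1024  # assumed 8 MiB available for weights
BYTES_PER_PARAM = 2              # FP16 weights


def place_layers(layers: List[Layer]) -> Tuple[List[str], int]:
    """Greedily assign each layer, in order, to "accelerator" or "host_cpu".

    Returns the per-layer placement and the number of device handoffs; each
    handoff implies copying activations between the accelerator and the host.
    """
    placement: List[str] = []
    used_bytes = 0
    for layer in layers:
        size = layer.num_params * BYTES_PER_PARAM
        if layer.op in SUPPORTED_OPS and used_bytes + size <= ON_CHIP_BYTES:
            placement.append("accelerator")
            used_bytes += size
        else:
            # Unsupported op, or its weights no longer fit on chip:
            # fall back to the host CPU.
            placement.append("host_cpu")
    handoffs = sum(1 for a, b in zip(placement, placement[1:]) if a != b)
    return placement, handoffs
```

A network whose layers all map to the accelerator yields zero handoffs; every unsupported or oversized layer in the middle of the graph adds two, which is one way the data transfer penalties described above accumulate.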

Jetson Nano’s flexible software and full framework support, memory capacity, and unified memory subsystem make it able to run a myriad of different networks at up to full HD resolution, including variable batch sizes on multiple sensor streams concurrently. These benchmarks represent a sampling of popular networks, but users can deploy a wide variety of models and custom architectures to Jetson Nano with accelerated performance. Nor is Jetson Nano limited to DNN inference: its CUDA architecture can be leveraged for computer vision and digital signal processing (DSP), using algorithms including FFTs, BLAS, and LAPACK operations, along with user-defined CUDA kernels.
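As a concrete example of the DSP workloads mentioned above, the FFT is the standard building block for spectral analysis. On the GPU, NVIDIA's cuFFT library provides CUDA implementations; the sketch below is only a pure-Python CPU reference of the radix-2 Cooley-Tukey algorithm to show what such a library computes. It does not use or imitate the cuFFT API, and the `dft` helper is included solely as a slow cross-check.

```python
import cmath
from typing import List, Sequence


def fft(x: Sequence[complex]) -> List[complex]:
    """Recursive radix-2 Cooley-Tukey FFT; len(x) must be a power of two."""
    n = len(x)
    if n == 0 or n & (n - 1):
        raise ValueError("length must be a non-zero power of two")
    if n == 1:
        return [complex(x[0])]
    even = fft(x[0::2])  # transform of even-indexed samples
    odd = fft(x[1::2])   # transform of odd-indexed samples
    out = [0j] * n
    for k in range(n // 2):
        # Butterfly: combine the half-size transforms with a twiddle factor.
        twiddle = cmath.exp(-2j * cmath.pi * k / n) * odd[k]
        out[k] = even[k] + twiddle
        out[k + n // 2] = even[k] - twiddle
    return out


def dft(x: Sequence[complex]) -> List[complex]:
    """Direct O(n^2) discrete Fourier transform, used as a reference."""
    n = len(x)
    return [sum(x[t] * cmath.exp(-2j * cmath.pi * k * t / n) for t in range(n))
            for k in range(n)]
```

For a 16-sample cosine that completes 3 cycles, the magnitude spectrum from `fft` peaks at bin 3 (and its mirror, bin 13). The recursion reduces the O(n^2) direct transform to O(n log n), which is the same structure GPU FFT libraries parallelize.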